There’s a familiar arc in AI development. A team builds a model, wires up a pipeline, and ships it. It works. In the demo, it’s fast. Features arrive cleanly, predictions feel fresh, vector search returns sensible results. Everyone is happy.

Then production happens.

Latencies spike unpredictably. Features arrive stale. The vector index that performed beautifully at 100K records starts degrading at 10M. The system that hummed in development begins to wheeze under real load. The model hasn’t changed. The accuracy metrics still look fine. But the system is struggling — and accuracy is no longer the only thing that matters.

This post is about what comes after model accuracy: the infrastructure concerns that determine whether your real-time AI actually works in production at scale.

The Gap Between Dev and Prod

Most ML pipelines are designed around a happy-path assumption: data is clean, features are fresh, requests arrive at a manageable pace, and the compute resources you provisioned are enough. These assumptions hold in development. They rarely hold at scale.

The production environment introduces three categories of pressure that expose architectural weaknesses:

1. Load variability. Traffic is never flat. Real-world AI workloads spike: product launches, viral events, end-of-quarter reporting rushes, user behavior patterns tied to time zones. A pipeline that performs well at P50 is not guaranteed to behave acceptably at P99, and P99 is where your users live when things go wrong.

2. Data velocity. Features go stale. The world changes faster than batch refresh cycles. For recommendation systems, fraud detection, personalization engines, and anything that depends on recent behavioral signals, a feature value that’s 15 minutes old can be meaningfully worse than one that’s 15 seconds old. The longer the gap between feature generation and model consumption, the more prediction quality tends to degrade.

3. Index drift. Vector search is not a set-it-and-forget-it operation. As your embedding space grows and evolves (new documents, updated products, revised knowledge bases), the indices that power semantic search require continuous maintenance. Approximate Nearest Neighbor (ANN) indices in particular can lose recall and slow down as the data distribution shifts underneath them.

Understanding these three pressures is the first step toward designing systems that survive them.
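To make the P50 versus P99 point concrete, here is a minimal sketch that computes both from a list of request latencies. The `percentile` helper and the sample numbers are made up for illustration; it uses the nearest-rank definition, which is one of several common conventions.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must be non-empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Made-up latencies in milliseconds: 97 fast requests and 3 slow ones.
latencies_ms = [20] * 97 + [900, 1200, 1500]

p50 = percentile(latencies_ms, 50)
p99 = percentile(latencies_ms, 99)
print(f"P50 = {p50} ms, P99 = {p99} ms")
```

With this sample the median is 20 ms while P99 is 1200 ms: a dashboard that only tracks the median would show nothing wrong.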

What “Real-Time” Actually Requires

“Real-time AI” is an overloaded term. Before you can design for it, you need to be precise about what it means in your context. There are at least three meaningful tiers:

- Near-real-time (seconds to minutes): Acceptable for many analytics, batch recommendation refreshes, and reporting use cases.

- Low-latency (sub-second): Required for interactive recommendation, search, and user-facing personalization.

- Streaming real-time (milliseconds): Required for fraud detection, financial trading signals, and reactive safety systems.

Each tier demands different architectural choices. A feature store that works beautifully for near-real-time refreshes may be completely unsuited for millisecond-latency inference. The first architectural question to ask isn’t “how do we get features?” — it’s “what does real-time actually mean for this workload?”
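One way to force that question early is to write the latency budget down and map it to a tier explicitly. The sketch below does this with a hypothetical `classify_tier` function. The 100 ms and 1000 ms cut-offs are assumptions chosen for illustration; the tiers above do not define exact boundaries, so pick thresholds that match your own workload.

```python
from enum import Enum


class Tier(Enum):
    STREAMING = "streaming real-time"
    LOW_LATENCY = "low-latency"
    NEAR_REAL_TIME = "near-real-time"


def classify_tier(latency_budget_ms: float) -> Tier:
    """Map an end-to-end latency budget to a tier (thresholds are illustrative assumptions)."""
    if latency_budget_ms <= 0:
        raise ValueError("latency budget must be positive")
    if latency_budget_ms < 100:      # assumed boundary for "milliseconds"
        return Tier.STREAMING
    if latency_budget_ms < 1000:     # sub-second
        return Tier.LOW_LATENCY
    return Tier.NEAR_REAL_TIME       # seconds to minutes


print(classify_tier(5).value)
print(classify_tier(300).value)
print(classify_tier(30_000).value)
```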

Once you’ve answered that, you can reason about the pipeline design.

The Three Architectural Pillars

1. Feature Freshness: Designing for the Speed of Your Signal

The feature pipeline is where most latency and staleness problems originate. There are two broad architectures:

Batch feature pipelines compute features on a schedule (hourly or daily) or on demand, and write them to a feature store. They’re operationally simple and cost-efficient. They’re also structurally incapable of delivering fresh signals for low-latency workloads.

Streaming feature pipelines compute features continuously as events arrive, typically with Apache Kafka as the event log and a stream processor such as Kafka Streams, Apache Flink, or Spark Structured Streaming. They’re more complex to build and operate, but they’re the only viable path when your model needs to reason about what happened in the last 30 seconds.

The practical reality is that most production systems need both. A Lambda architecture pattern — combining batch for historical aggregates with streaming for real-time signals — gives you the freshness of streaming where it matters without abandoning the reliability and richness of batch-computed features.
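As a rough sketch of how the two paths can meet at serving time, the example below adds a streaming delta to a batch aggregate for a purchase-count feature. `BatchAggregate` and `purchase_count` are hypothetical names, and the sketch deliberately ignores window expiry (events aging out of a 7-day window), which a real implementation would have to handle.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class BatchAggregate:
    count: int               # purchases counted by the last batch job
    computed_through: float  # events with timestamp <= this are already included


def purchase_count(batch: BatchAggregate, recent_event_times: Sequence[float], now: float) -> int:
    """Serving-time merge: batch aggregate plus streaming events newer than the batch cutoff."""
    delta = sum(1 for t in recent_event_times if batch.computed_through < t <= now)
    return batch.count + delta


# Made-up data: the batch job covered events up to t=1000; the stream has seen four events.
batch = BatchAggregate(count=12, computed_through=1000.0)
stream_events = [990.0, 1005.0, 1020.0, 1100.0]

total = purchase_count(batch, stream_events, now=1050.0)
print(total)
```

The event at 990 is already inside the batch count and the event at 1100 is in the future relative to `now`, so only two streaming events are added. Using the batch cutoff as the boundary is what prevents double counting.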

Key design decisions in feature pipelines:

- Point-in-time correctness: Features used for training must reflect what the system would have known at the moment of prediction, not values computed with hindsight. Failure to enforce this leaks future information into the training set and introduces training-serving skew, one of the most insidious sources of silent model degradation.

- Backfill capability: Can your streaming pipeline reconstruct historical features when you retrain? Architectures that can’t backfill trade away long-term flexibility for short-term simplicity.

- Feature reuse: The same feature — a user’s 7-day purchase count, for example — is often needed by multiple models. Centralizing feature computation prevents redundant infrastructure and inconsistent definitions across teams.
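Point-in-time correctness is easier to see in code. The sketch below assumes a feature's history is kept as a timestamp-sorted list of `(timestamp, value)` pairs and looks up the latest value known at prediction time. The `point_in_time_value` helper and the sample history are illustrative, not a feature store API.

```python
from bisect import bisect_right


def point_in_time_value(history, prediction_time):
    """Return the latest value with timestamp <= prediction_time, or None if none existed yet.

    history: list of (timestamp, value) pairs sorted by timestamp ascending.
    """
    timestamps = [ts for ts, _ in history]
    idx = bisect_right(timestamps, prediction_time)
    return history[idx - 1][1] if idx > 0 else None


# Made-up history of a user's 7-day purchase count.
history = [(100, 1), (200, 3), (300, 7)]

for prediction_time in (50, 250, 1000):
    print(prediction_time, point_in_time_value(history, prediction_time))
```

A training row for a prediction made at t=250 gets the value 3, not the later value 7. Joining on "latest value" instead would leak the future into the training set.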

2. The Feature Store: Consistency and Latency at Scale

A feature store is the operational hub of a real-time ML system. It serves as the bridge between feature computation (where data scientists live) and model inference (where production systems live). Getting its design right has outsized consequences.

The central tension in feature store design is between consistency and latency. Achieving both simultaneously at scale is genuinely hard.

The dual-store pattern is the most widely adopted solution. It separates storage into two layers:

- An online store, typically an in-memory or low-latency key-value store, serves features at inference time. Reads must be fast, often single-digit milliseconds or less. The tradeoff is cost: fast storage is expensive, so online stores typically hold only the most recent feature values.

- An offline store — typically a columnar data warehouse — serves training pipelines, batch scoring, and historical analysis. Reads are slower but the storage cost is orders of magnitude lower.

A write path synchronizes values between the two stores as new features are computed.
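A toy version of the dual-store write path might look like the following. `DualStore` is a hypothetical in-memory stand-in: a dict plays the online store and a list plays the offline store. The rule that late-arriving writes never overwrite a newer online value is an assumption about the desired semantics, not something every feature store does.

```python
class DualStore:
    """In-memory stand-in for an online/offline feature store pair."""

    def __init__(self):
        self.online = {}   # (entity_id, feature) -> (timestamp, value), latest only
        self.offline = []  # append-only history of (entity_id, feature, timestamp, value)

    def write(self, entity_id, feature, timestamp, value):
        # The offline store keeps every value for training and backfills.
        self.offline.append((entity_id, feature, timestamp, value))
        key = (entity_id, feature)
        current = self.online.get(key)
        # Skip late-arriving writes so the online value never moves backwards in time.
        if current is None or timestamp >= current[0]:
            self.online[key] = (timestamp, value)

    def read_online(self, entity_id, feature):
        entry = self.online.get((entity_id, feature))
        return None if entry is None else entry[1]


store = DualStore()
store.write("u1", "purchases_7d", timestamp=10, value=1)
store.write("u1", "purchases_7d", timestamp=20, value=2)
store.write("u1", "purchases_7d", timestamp=15, value=5)  # arrives late

print(store.read_online("u1", "purchases_7d"), len(store.offline))
```

Both stores are fed by the same `write` call, so they share one definition of the feature. That is the property that keeps the two layers from drifting apart.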

Consistency pitfalls to design against:

- Training-serving skew: If the offline store and online store derive features differently — even slightly — your model is trained on data that doesn’t match what it sees in production. This is silent and difficult to detect.

- Schema drift: Features evolve. Adding a new feature, changing a transformation, or retiring a deprecated one all require careful version management. Feature stores without explicit schema governance accumulate technical debt that eventually manifests as production incidents.

- Cold start: When a new entity (a new user, a new product) arrives with no feature history, what does the model see? Null-handling and default value strategy belong in the feature store design, not as afterthoughts.
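For the cold-start case, one option is to declare defaults next to the feature definitions and report which values were defaulted so the gap stays visible in monitoring. The feature names and default values below are hypothetical.

```python
# Hypothetical defaults, declared alongside the feature definitions.
FEATURE_DEFAULTS = {
    "purchases_7d": 0,        # a new user has made no purchases
    "avg_order_value": 42.0,  # assumed population mean, for illustration only
}


def with_defaults(raw, defaults):
    """Fill missing or null features and return (resolved features, names that were defaulted)."""
    resolved = {}
    defaulted = []
    for name, default in defaults.items():
        value = raw.get(name)
        if value is None:
            resolved[name] = default
            defaulted.append(name)
        else:
            resolved[name] = value
    return resolved, defaulted


new_user, new_user_defaulted = with_defaults({}, FEATURE_DEFAULTS)
known_user, known_user_defaulted = with_defaults({"purchases_7d": 4}, FEATURE_DEFAULTS)
print(new_user, new_user_defaulted)
print(known_user, known_user_defaulted)
```

Returning the list of defaulted names lets the caller log how often the model runs on placeholder values instead of hiding that behind a silent fallback.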

Access pattern design:

Feature retrieval for inference often involves batch point lookups — fetching dozens of feature values for a single entity across multiple feature groups simultaneously. The data model and indexing strategy of your online store must be optimized for this access pattern, not for the range scans and aggregations that suit an offline analytical store.
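One data model that suits this access pattern keeps all of an entity's features under a single key, so a multi-feature read is one lookup. The sketch below uses a hypothetical `InMemoryOnlineStore` with a round-trip counter; a real key-value store would expose its own multi-get or hash-read call.

```python
class InMemoryOnlineStore:
    """Stand-in for a key-value online store that counts round trips."""

    def __init__(self):
        self._rows = {}      # entity_id -> {"group:feature": value}
        self.round_trips = 0

    def put(self, entity_id, group, feature, value):
        self._rows.setdefault(entity_id, {})[f"{group}:{feature}"] = value

    def get_many(self, entity_id, refs):
        """Fetch several (group, feature) pairs for one entity in a single round trip."""
        self.round_trips += 1
        row = self._rows.get(entity_id, {})
        return {ref: row.get(f"{ref[0]}:{ref[1]}") for ref in refs}


online = InMemoryOnlineStore()
online.put("u1", "activity", "purchases_7d", 3)
online.put("u1", "profile", "country", "DE")

features = online.get_many(
    "u1",
    [("activity", "purchases_7d"), ("profile", "country"), ("profile", "age")],
)
print(features, online.round_trips)
```

Features from two groups come back in one call, and the missing `("profile", "age")` value is returned as `None` so the caller can apply its cold-start policy.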

3. Vector Search at Scale: Maintaining Performance Under Continuous Change

Vector databases and ANN search have moved from research curiosity to production infrastructure in a remarkably short time. They’re now central to RAG (Retrieval-Augmented Generation) pipelines, semantic search, recommendation systems, and multimodal applications. And they introduce a class of operational problems that most teams underestimate.

The index degradation problem

ANN indices — HNSW, IVF, and their variants — are built for approximate search speed, not for correctness under mutation. They’re typically optimized at build time for a specific data distribution. As you add, update, and delete vectors continuously, several things happen:

- Recall degrades: The approximation quality drops as the index structure diverges from the actual data distribution.

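- Latency increases: Searches do more work as the structure becomes less optimal. Graph indices such as HNSW traverse more nodes; IVF-style indices scan unbalanced partitions.

- Tombstone accumulation: Deleted vectors that are only marked as deleted, not purged, still occupy the index. They slow traversal, and unless they are filtered at query time they can surface as phantom results.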
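The tombstone cost can be illustrated with a toy index. The sketch below uses brute-force search as a stand-in for an ANN index and assumes deleted entries are filtered at query time. `TombstonedIndex` is a hypothetical class, not the API of any vector database.

```python
import math


class TombstonedIndex:
    """Brute-force stand-in for an ANN index with soft deletes."""

    def __init__(self):
        self.vectors = {}        # id -> vector
        self.tombstones = set()  # ids marked deleted but not yet purged

    def add(self, vec_id, vector):
        self.vectors[vec_id] = vector
        self.tombstones.discard(vec_id)

    def delete(self, vec_id):
        if vec_id in self.vectors:
            self.tombstones.add(vec_id)

    def search(self, query, k):
        """Return (ids of the k nearest live vectors, number of entries visited)."""
        visited = 0
        scored = []
        for vec_id, vector in self.vectors.items():
            visited += 1  # tombstoned entries are still visited
            if vec_id in self.tombstones:
                continue
            scored.append((math.dist(query, vector), vec_id))
        scored.sort()
        return [vec_id for _, vec_id in scored[:k]], visited

    def compact(self):
        """Physically remove tombstoned entries."""
        for vec_id in self.tombstones:
            del self.vectors[vec_id]
        self.tombstones.clear()


index = TombstonedIndex()
for i, name in enumerate(["a", "b", "c", "d"]):
    index.add(name, (float(i), 0.0))
index.delete("a")
index.delete("b")

before = index.search((0.0, 0.0), k=2)
index.compact()
after = index.search((0.0, 0.0), k=2)
print(before, after)
```

The results are the same before and after compaction, but the search visits four entries before and two after. At production scale that wasted work is what shows up as latency.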

The naive solution — periodic full index rebuilds — introduces its own problems: rebuild latency, resource contention during the rebuild window, and the risk of serving stale or inconsistent results during transitions.

More sophisticated approaches include:

- Incremental indexing: Adding new vectors to the live index rather than rebuilding from scratch, trading some approximation quality for operational continuity.

- Segment-based architectures: Maintaining multiple smaller index segments that are merged periodically, similar to how LSM-tree databases manage compaction. Fresh vectors land in small, easily-rebuilt segments; cold vectors live in stable, large segments.

- Recall monitoring: Treating recall as an operational metric — not just a benchmark number — and triggering maintenance operations when it drops below acceptable thresholds.
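Recall monitoring can be sketched in a few lines: sample some queries, compute exact neighbors by brute force, and compare them with what the index returned. The helper names, the sample vectors, and the 0.9 threshold are assumptions for illustration. The `approx_ids` list stands in for the output of a real ANN index.

```python
import math


def exact_top_k(query, vectors, k):
    """Brute-force ground truth: ids of the k nearest vectors by Euclidean distance."""
    ranked = sorted(vectors, key=lambda vec_id: (math.dist(query, vectors[vec_id]), vec_id))
    return ranked[:k]


def recall_at_k(approx_ids, exact_ids):
    """Fraction of the true nearest neighbors that the approximate search returned."""
    if not exact_ids:
        raise ValueError("exact_ids must be non-empty")
    return len(set(approx_ids) & set(exact_ids)) / len(exact_ids)


def needs_maintenance(recalls, threshold):
    """True when mean recall over the sampled queries falls below the threshold."""
    return sum(recalls) / len(recalls) < threshold


# Made-up data: four vectors and one sampled query.
vectors = {"a": (0.0, 0.0), "b": (1.0, 0.0), "c": (0.0, 2.0), "d": (5.0, 5.0)}
exact_ids = exact_top_k((0.0, 0.0), vectors, k=3)
approx_ids = ["a", "b", "d"]  # pretend this came from the ANN index

recall = recall_at_k(approx_ids, exact_ids)
print(exact_ids, round(recall, 3), needs_maintenance([recall], threshold=0.9))
```

Brute force is too slow to run on every query, so in practice this would run on a small sample of live traffic on a schedule, with the mean recall exported like any other service metric.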

Filtering and hybrid search

Production vector search is rarely pure semantic similarity. Real workloads layer metadata filters on top of vector similarity: find the most relevant product in a user’s country, find the most similar document within a specific category, find semantically related customers above a revenue threshold.

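Pre-filtering and post-filtering strategies have meaningfully different performance and correctness profiles. Pre-filtering (restricting the candidate set before ANN search) guarantees that every result satisfies the filter, but a highly selective filter can force a slow scan of the matching set or, in graph-based indices, cut the paths the search relies on and hurt recall. Post-filtering (running ANN search broadly, then applying filters) keeps the search itself fast, but wastes compute on candidates that are then discarded and can return fewer than the requested number of results when the filter is highly selective. The right approach depends on your data distribution and selectivity characteristics, and it needs to be a deliberate architectural choice, not a default.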
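A toy comparison shows how the two strategies behave under a selective filter. Exact brute-force search stands in for the ANN index here, and the function names, the `overfetch` parameter, and the sample catalog are all assumptions made for illustration.

```python
import math


def nearest(query, items, k):
    """Exact top-k by Euclidean distance; stands in for an ANN search."""
    return sorted(items, key=lambda item: math.dist(query, item["vector"]))[:k]


def pre_filter_search(query, items, predicate, k):
    """Apply the metadata filter first, then search only the matching items."""
    candidates = [item for item in items if predicate(item)]
    return nearest(query, candidates, k)


def post_filter_search(query, items, predicate, k, overfetch=1):
    """Search everything for k * overfetch hits, then drop hits that fail the filter."""
    hits = nearest(query, items, k * overfetch)
    return [item for item in hits if predicate(item)][:k]


# Made-up catalog: the four closest items are in the US, the two farthest in DE.
countries = ["US", "US", "US", "US", "DE", "DE"]
items = [{"id": i, "vector": (float(i), 0.0), "country": c} for i, c in enumerate(countries)]


def in_germany(item):
    return item["country"] == "DE"


query = (0.0, 0.0)
pre = [item["id"] for item in pre_filter_search(query, items, in_germany, k=2)]
post = [item["id"] for item in post_filter_search(query, items, in_germany, k=2)]
post_wide = [item["id"] for item in post_filter_search(query, items, in_germany, k=2, overfetch=3)]
print(pre, post, post_wide)
```

Pre-filtering finds both German items. Post-filtering with no over-fetch returns nothing, because the two nearest hits are both filtered out, and only recovers the results once it fetches three times as many candidates. How much over-fetch is enough depends on filter selectivity, which is why the choice has to be validated against real queries.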

A Framework for Evaluating Your Pipeline

Before committing to architectural decisions, it’s worth stress-testing your current or planned design against these questions:

On feature freshness:

– What is the maximum acceptable age of each feature at inference time?

– Do you have a streaming path for high-velocity signals?

– Is training-serving skew actively monitored?

On the feature store:

– Can you retrieve all features for a single inference request in a single round-trip?

– Is your schema versioned and your transformation logic reproducible?

– What happens when a new entity arrives with no feature history?

On vector search:

– Do you track recall as a production metric?

– How do you handle index updates without full rebuilds?

– Is your filtering strategy validated against your actual query distribution?

On the system as a whole:

– What is your P99 latency SLA, and have you load-tested to it?

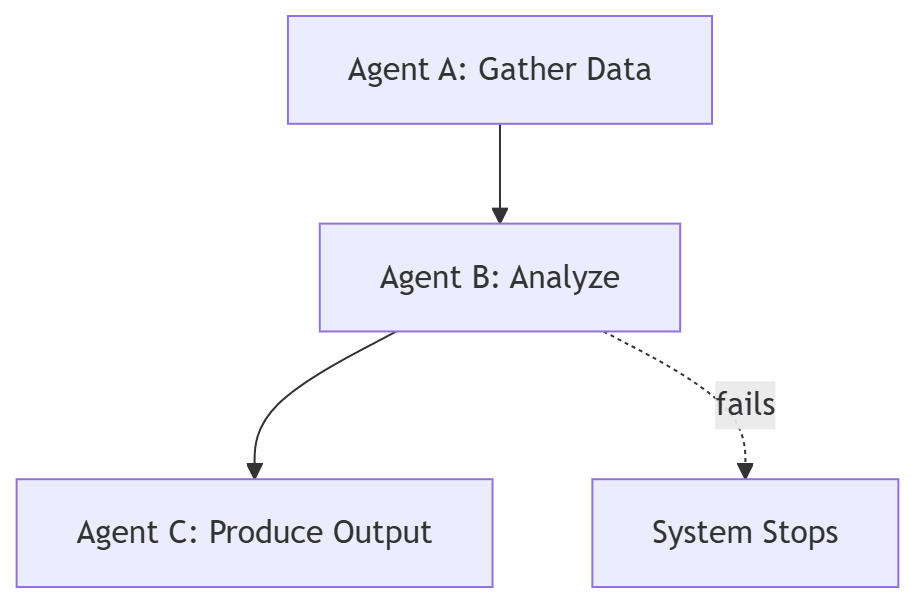

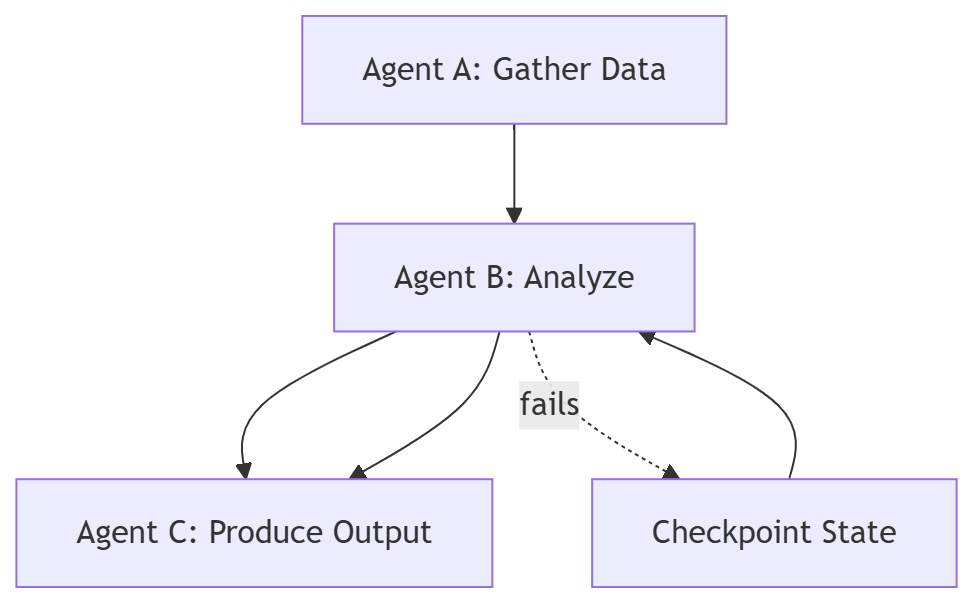

– Where are your single points of failure?

– Can you replay or backfill features and embeddings if a component fails?

These aren’t hypothetical questions. Each one corresponds to a category of production incident that real teams have encountered when real-time AI systems scaled beyond their original design envelope.

The Shift in Mindset

Scaling real-time AI infrastructure requires a shift in how engineering teams think about the problem.

In early development, the model is the system. Accuracy is the primary metric. Everything else is scaffolding.

At scale, the pipeline is the system. The model is one component — important, but dependent on everything that surrounds it. Latency, freshness, consistency, and recall become first-class engineering concerns, tracked with the same rigor as model performance metrics.

The teams that make this transition successfully are the ones that start treating their feature pipelines, feature stores, and vector indices not as data infrastructure afterthoughts, but as the production systems they actually are — with SLAs, observability, capacity planning, and failure modes worth designing against from the start.

Real-time AI at scale is harder than it looks. But it’s not mysterious. The problems are identifiable, the architectural patterns are well-understood, and the path forward is clear once you’re asking the right questions.

This post is part of an ongoing series on building production-grade AI systems. If you found this useful, consider sharing it with a teammate who’s hitting these problems for the first time.

When Your AI Pipeline Grows Up

- Real Time AI At Scale – This Post.

- Feature Freshness – Coming 13 May 2026

- Feature Store – Coming 20 May 2026

- Vector Search – Coming 27 May 2026

- Operations – Coming 3 June 2026