The Local Brain — First Light

A vault of 3,150 Markdown files is just a very organized digital attic. It’s a repository of every conversation, code snippet, and research rabbit hole I’ve navigated with AI over the last two years, but until now, it was static. It was “organized,” but it wasn’t intelligent. To find a specific Movesense API call or a forgotten patent date, I still had to know which box I put it in.

Today, we turn the key. We are moving from mere storage to a private, semantic intelligence estate.

The Engineering Leh Sigh

I call the struggle to reach this point the Leh sigh, that weary, familiar breath you take when a “simple” task reveals its hidden fangs. On paper, building a local semantic search is easy: pick a database, call an embedding API, and save. In reality, it was a 33-iteration battle against the “Last 10%” of systems engineering.

We hit the Context Wall, where massive technical logs overflowed the input limits of our embedding models, forcing us to rethink how we slice data. We fought Zombie Indices, where stale chunks from old file versions haunted search results, leading us to implement atomic “Delete-before-Upsert” indexing. And we survived a Telemetry Crisis, where the database engine tried so hard to “phone home” to its developers that it repeatedly crashed the CLI, requiring a surgical strike to silence the internal trackers.

The Coordinate Map of Thought

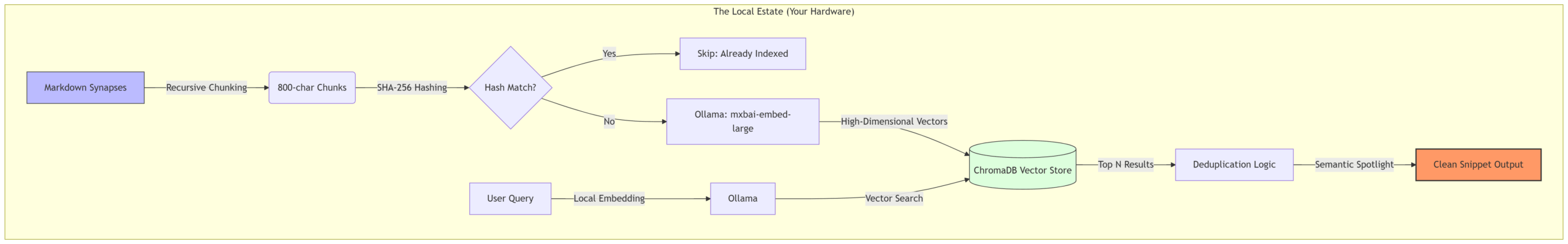

To solve these, we built a stack that prioritizes integrity over ease. The centerpiece is Ollama, running the mxbai-embed-large model locally. This is the engine that translates human thought into high-dimensional coordinates.
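
For readers who want to see what “translating text into coordinates” looks like on the wire, here is a minimal sketch of asking a local Ollama server for one embedding. It assumes Ollama is listening on its default port (11434) and uses its `/api/embeddings` endpoint; the helper names `build_embedding_request` and `embed` are illustrative and are not taken from the Scribe's code.

```python
import json
import urllib.request

# Assumption: Ollama's default local address; adjust if your server differs.
OLLAMA_URL = "http://localhost:11434/api/embeddings"
MODEL = "mxbai-embed-large"


def build_embedding_request(text: str) -> urllib.request.Request:
    """Build the POST request that asks the local server for one embedding."""
    payload = json.dumps({"model": MODEL, "prompt": text}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def embed(text: str, timeout: float = 30.0) -> list[float]:
    """Send the request and return the vector from the "embedding" field."""
    request = build_embedding_request(text)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())["embedding"]
```

Calling `embed("Movesense calibration")` with the server running would return a list of floats. Nothing in the request leaves the machine, because the URL points at localhost.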

To keep ideas from being cut in half by the model’s input limit, we implemented a sliding window for our data. Before a single vector is saved, the Scribe slices the text into 800-character segments, each sharing 150 characters with its neighbor, so a sentence that straddles one boundary usually survives intact in the next segment.

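    CHUNK_SIZE = 800     # characters per chunk (value from the text above)
    CHUNK_OVERLAP = 150  # characters shared by consecutive chunks


    def _chunk_text(text: str) -> list[str]:
        """Split text into chunks of CHUNK_SIZE chars with CHUNK_OVERLAP."""
        if not text.strip():
            return []
        if len(text) <= CHUNK_SIZE:
            return [text]
        chunks: list[str] = []
        start = 0
        # Advance by less than a full chunk so neighbors overlap;
        # max(1, ...) guards against a zero or negative step.
        step = max(1, CHUNK_SIZE - CHUNK_OVERLAP)
        while start < len(text):
            chunk = text[start : start + CHUNK_SIZE]
            if chunk.strip():  # skip whitespace-only windows
                chunks.append(chunk)
            start += step
        return chunks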
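
To make the window arithmetic concrete, here is a small sketch that computes only the character offsets the sliding window visits, using the same 800/150 settings. The helper `chunk_spans` is hypothetical and ignores the whitespace check, so it shows where the slices fall rather than what they contain.

```python
CHUNK_SIZE = 800
CHUNK_OVERLAP = 150


def chunk_spans(length: int) -> list[tuple[int, int]]:
    """Return the (start, end) offsets the sliding window produces for a text of `length` chars."""
    if length == 0:
        return []
    if length <= CHUNK_SIZE:
        return [(0, length)]  # short texts stay in one piece
    step = max(1, CHUNK_SIZE - CHUNK_OVERLAP)  # 650 with the settings above
    return [(start, min(start + CHUNK_SIZE, length)) for start in range(0, length, step)]


print(chunk_spans(2000))
# [(0, 800), (650, 1450), (1300, 2000), (1950, 2000)]
```

Each span starts 650 characters after the previous one, so neighbors share 150 characters. Note the last span: it is only 50 characters long and sits entirely inside the one before it. That tail is harmless, but a stricter variant could stop once a window reaches the end of the text.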

When a synapse is indexed, we now compute a content fingerprint, the first 16 characters of its SHA-256 hash, to serve as a lightweight data-drift indicator. The Scribe is self-aware; if a file hasn’t changed, the fingerprint matches and the system skips re-embedding it entirely. If it has changed, we trigger an atomic update: the old “memories” are wiped, and the new ones are written only if the entire process succeeds. It is all or nothing.
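
The fingerprint check and the all-or-nothing update can be sketched in a few lines. This is an illustration under stated assumptions, not the Scribe's implementation: `InMemoryIndex` is a hypothetical stand-in for the vector database, and `prepare` stands in for the chunk-and-embed step, which is the part most likely to fail.

```python
import hashlib
from typing import Callable


def fingerprint(content: str) -> str:
    """First 16 hex characters of the SHA-256 digest of the content."""
    return hashlib.sha256(content.encode("utf-8")).hexdigest()[:16]


class InMemoryIndex:
    """Hypothetical store mapping a file path to (fingerprint, chunks)."""

    def __init__(self) -> None:
        self._files: dict[str, tuple[str, list[str]]] = {}

    def index_file(self, path: str, content: str,
                   prepare: Callable[[str], list[str]]) -> str:
        new_fp = fingerprint(content)
        old = self._files.get(path)
        if old is not None and old[0] == new_fp:
            return "skipped"  # unchanged file: no re-processing

        # Do all the failure-prone work first. If prepare() raises,
        # nothing has been deleted and the old entries stay searchable.
        new_chunks = prepare(content)

        # Delete-before-upsert: drop every old chunk, then write the new set,
        # so no stale chunk from the previous version survives.
        self._files.pop(path, None)
        self._files[path] = (new_fp, new_chunks)
        return "indexed"

    def chunks_for(self, path: str) -> list[str]:
        entry = self._files.get(path)
        return list(entry[1]) if entry else []
```

In a real database the delete and the write are separate calls, so true atomicity depends on what the store offers (a transaction, or a rollback on failure). The ordering shown here, preparing everything before touching the old entries, is the part that carries over.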

The Payoff: Semantic Spotlight

The result is what I call “First Light”—the moment the machine actually understands the intent of a query. By searching across what has now become 12,400 semantic chunks, the Scribe pulls the needle from the haystack in under three seconds.

    # Querying two years of research in 2.8 seconds
    python3 main.py query "Movesense calibration" --n-results 1

    🔍 Top 1 match for: Movesense calibration

    --- Result 1 ---
    Timestamp: 2025-06-20 07:07
    Snippet: It sounds like rolling my own would indeed be the best option, plus if I'm working
    directly with therapists they might have some insights into what specific
    information would be valuable for their clients...
    File: vault/synapses/2025-06-20-0707-rolling-my-own-logic.md

This isn’t keyword matching. The system found this result because it understood the concept of building a custom calibration tool for clinical use, even though the word “calibration” only appeared in the broader file context.
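
Under the hood, “understanding the concept” means the query vector landed close to the chunk's vector. Here is a toy sketch of that ranking step, assuming cosine similarity as the closeness measure (the metric the Scribe's database actually uses isn't stated here). The three-dimensional vectors and chunk names are made up for illustration; real mxbai-embed-large vectors have many more dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_matches(query: list[float], chunks: dict[str, list[float]],
                n_results: int = 1) -> list[str]:
    """Return the names of the n_results chunks most similar to the query."""
    ranked = sorted(chunks, key=lambda name: cosine_similarity(query, chunks[name]),
                    reverse=True)
    return ranked[:n_results]


# Made-up vectors: chunk_a points roughly the same way as the query, chunk_b does not.
toy_chunks = {
    "chunk_a": [0.9, 0.1, 0.2],
    "chunk_b": [0.1, 0.9, 0.0],
}
toy_query = [0.8, 0.2, 0.1]

print(top_matches(toy_query, toy_chunks))
```

No words are compared anywhere in this code. Two passages that share no vocabulary can still rank as neighbors if the embedding model places them in the same region of the space.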

The Sovereign Architecture

As the vault grows, the relationship between my data and my hardware becomes the ultimate bottleneck. Because the embeddings run on-device, my queries never leave the local network.

Privacy isn’t a setting; it’s the architecture.

Storing the index on a high-performance NVMe drive keeps disk reads from becoming the slow step, so the “latency of thought” stays low even as the estate expands. The foundation is set: 3,150 synapses, 12,400 semantic vectors, and not a single byte sent to the cloud.

We have moved from a digital attic to a living cognitive estate, where the value of the data isn’t just in its existence, but in its accessibility.

But a brain that only remembers the past is just a library. To truly act as a collaborator, the Scribe needs to do more than find information—it needs to synthesize it. In Phase 2, we stop looking backward and start building the future. It’s time to let the Scribe talk back.

How do you handle the “digital attic” problem in your own workflow? Is your data working for you, or are you just storing it?

The Sovereign Synapse Series

- The Great Export

- The Context-Cleaner

- The Local Brain – This Post

- The Interactive Agent – Coming Soon